|

| ||

| ||

|

Iterated Local Searchedited by Thomas Stützle and Luis Paquete, INTELLEKTIK ContentsAbout Iterated Local SearchThe essence of the Iterated Local Serach (ILS) metaheuristic can be given in a nut-shell: one iteratively builds a sequence of solutions generated by the embedded heuristic, leading to far better solutions than if one were to use repeated random trials of that heuristic. This simple idea has a long history, and its rediscovery by many authors has lead to many different names for ILS like iterated descent, large-step Markov chains, iterated Lin-Kernighan, chained local optimization , or combinations of these. For us, there are two main points that make an algorithm an ILS:

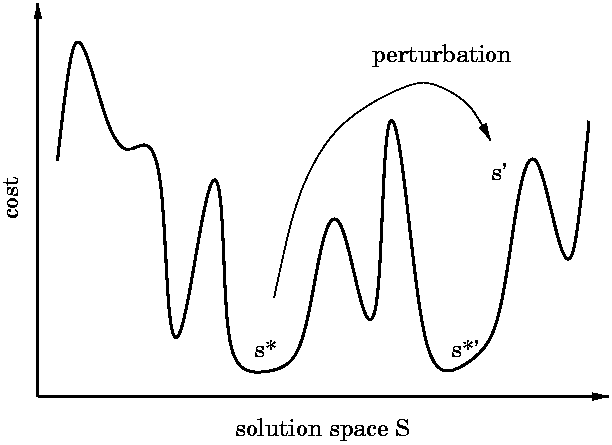

Idea ILS explores the search of local minima (denominated as S* ) w.r.t. to some given embedded heuristic, called LocalSearch in the following. ILS achieves this heuristically as follows. Given the current s*, we first apply a change or perturbation that leads to an intermediate state s' (which belongs to S). Then LocalSearch is applied to s' and we reach a solution s*' in S*. If s*' passes an acceptance test, it becomes the next element of the walk in S*; otherwise, one returns to s*. The resulting walk is a case of a stochastic search in S*, but where neighborhoods are never explicitly introduced. This ILS procedure should lead to good biased sampling as long as the perturbations are neither too small nor too large. If they are too small, one will often fall back to s* and few new solutions of S* will be explored. If on the contrary the perturbations are too large, s' will be random, there will be no bias in the sampling, and we will recover a random restart type algorithm. Pictorial View The overall ILS procedure is pictorially illustrated in the following figure. To be complete, let us note that generally the ILS walk will not be reversible; in particular one may sometimes be able to step from s(1)* to s(2)* but not from s(2)* to s(1)*. However this ``unfortunate'' aspect of the procedure does not prevent ILS from being very effective in practice.

Since deterministic perturbations may lead to short cycles (for instance of length 2), one should randomize the perturbations or have them be adaptive so as to avoid this kind of cycling. If the perturbations depend on any of the previous s*, one has a walk in S* with memory.

Procedural View

Written in a procedural way, ILS algorithms as metaheuristics have

the following high level architecture:

Staying within Markovian walks, the most basic acceptance criteria will use only the difference in the costs of s* and s*'; this type of dynamics for the walk is then very similar in spirit to what occurs in simulated annealing. A limiting case of this is to accept only improving moves, as happens in simulated annealing at zero temperature; the algorithm then does (stochastic) descent in S*. If we add to such a method a CPU time criterion to stop the search for improvements, the resulting algorithm pretty much has two nested local searches; to be precise, it has a local search operating on S embedded in a stochastic search operating on S*. More generally, one can extend this type of algorithm to more levels of nesting, having a different stochastic search algorithm for S*, S**, etc... Each level would be characterized by its own type of perturbation and stopping rule; to our knowledge, such a construction has never been attempted.

Power of ILS The potential power of ILS lies in its biased sampling of the set of local optima. The efficiency of this sampling depends both on the kinds of perturbations and on the acceptance criteria. Interestingly, even with the most naive implementations of these parts, ILS is much better than random restart. But still much better results can be obtained if the ILS modules are optimized. First, the acceptance criteria can be adjusted empirically as in simulated annealing without knowing anything about the problem being optimized. This kind of optimization will be familiar to any user of metaheuristics, though the questions of memory may become quite complex. Second, the Perturbation routine can incorporate as much problem-specific information as the developer is willing to put into it. In practice, a rule of thumb can be used as a guide: ``a good perturbation transforms one excellent solution into an excellent starting point for a local search''. Together, these different aspects show that ILS algorithms can have a wide range of complexity, but complexity may be added progressively and in a modular way. (Recall in particular that all of the fine-tuning that resides in the embedded LocalSearch can be ignored if one wants, and it does not appear in the metaheuristic per-se.) This makes ILS an appealing metaheuristic for both academic and industrial applications. The cherry on the cake is speed: one can perform k local searches embedded within an ILS much faster than if the k local searches are run within random restart. Readings

Links

| |

|

||

|

||

|

Network Coordinator: Marco Dorigo e-mail: mdorigo@ulb.ac.be Web site responsibles: Christian Blum and Max Manfrin |